GitHub: https://github.com/xtekky/gpt4free

Last Commit: September 7th, 2024

Written by @xtekky & maintained by @hlohaus

By using this repository or any code related to it, you agree to the legal notice. The author is not responsible for the usage of this repository nor endorses it, nor is the author responsible for any copies, forks, or re-uploads made by other users, or anything else related to GPT4Free. This is the author's only account and repository. To prevent impersonation or irresponsible actions, please comply with the GNU GPL license this repository uses.

> [!Warning]
> "gpt4free" serves as a PoC (proof of concept), demonstrating the development of an API package with multi-provider requests, with features like timeouts, load balancing and flow control.

> [!Note]
> Latest version:

Stats:

pip install -U g4f

docker pull hlohaus789/g4f

🆕 What's New

- Added gpt-4o: simply use gpt-4o in chat.completions.create.

- Installation Guide for Windows (.exe): 💻 #installation-guide-for-windows

- Join our Telegram Channel: 📨 telegram.me/g4f_channel

- Join our Discord Group: 💬 discord.gg/XfybzPXPH5

- g4f now supports 100% local inference: 🧠 local-docs

🔻 Site Takedown

Is your site on this repository and you want to take it down? Send an email to takedown@g4f.ai with proof it is yours and it will be removed as fast as possible. To prevent reproduction please secure your API. 😉

🚀 Feedback and Todo

You can always leave some feedback here: https://forms.gle/FeWV9RLEedfdkmFN6

As per the survey, here is a list of improvements to come:

- Update the repository to include the new openai library syntax (ex: Openai() class) | completed, use g4f.client.Client

- Golang implementation

- 🚧 Improve Documentation (in /docs & Guides, Howtos, & Do video tutorials)

- Improve the provider status list & updates

- Tutorials on how to reverse sites to write your own wrapper (PoC only ofc)

- Improve the Bing wrapper. (Wait and Retry or reuse conversation)

- 🚧 Write a standard provider performance test to improve the stability

- Potential support and development of local models

- 🚧 Improve compatibility and error handling

📚 Table of Contents

- 🆕 What's New

- 📚 Table of Contents

- 🛠️ Getting Started

- 💡 Usage

- 🚀 Providers and Models

- 🔗 Powered by gpt4free

- 🤝 Contribute

- 🙌 Contributors

- ©️ Copyright

- ⭐ Star History

- 📄 License

🛠️ Getting Started

Docker Container Guide

Getting Started Quickly:

- Install Docker: Begin by downloading and installing Docker.

- Set Up the Container: Use the following commands to pull the latest image and start the container:

docker pull hlohaus789/g4f

docker run \

-p 8080:8080 -p 1337:1337 -p 7900:7900 \

--shm-size="2g" \

-v ${PWD}/har_and_cookies:/app/har_and_cookies \

-v ${PWD}/generated_images:/app/generated_images \

hlohaus789/g4f:latest

- Access the Client:

- To use the included client, navigate to: http://localhost:8080/chat/

- Or set the API base for your client to: http://localhost:1337/v1

- (Optional) Provider Login: If required, you can access the container's desktop here: http://localhost:7900/?autoconnect=1&resize=scale&password=secret for provider login purposes.
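Once the container is running, it can help to confirm that the three published ports actually accept connections. The following is a minimal sketch using only the Python standard library; the helper names (is_port_open, check_g4f_ports) and the port labels are illustrative and not part of g4f.

```python
import socket

# Ports published by the docker run command above (labels are descriptive only).
G4F_PORTS = {8080: "web client", 1337: "API", 7900: "container desktop"}


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, unreachable or timed out: treat all of these as "not open".
        return False


def check_g4f_ports(host: str = "localhost") -> dict:
    """Map each published port to whether it currently accepts connections."""
    return {port: is_port_open(host, port) for port in G4F_PORTS}
```

Calling check_g4f_ports() returns a dictionary such as {8080: True, 1337: True, 7900: False}, depending on what is reachable. An open port only shows that something is listening; it does not prove the service behind it is healthy.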

Installation Guide for Windows (.exe)

To ensure the seamless operation of our application, please follow the instructions below. These steps are designed to guide you through the installation process on Windows operating systems.

Installation Steps

- Download the Application: Visit our releases page and download the most recent version of the application, named g4f.exe.zip.

- File Placement: After downloading, locate the .zip file in your Downloads folder. Unpack it to a directory of your choice on your system, then execute the g4f.exe file to run the app.

- Open GUI: The app starts a web server with the GUI. Open your favorite browser and navigate to http://localhost:8080/chat/ to access the application interface.

- Firewall Configuration (Hotfix): Upon installation, it may be necessary to adjust your Windows Firewall settings to allow the application to operate correctly. To do this, access your Windows Firewall settings and allow the application.

By following these steps, you should be able to successfully install and run the application on your Windows system. If you encounter any issues during the installation process, please refer to our Issue Tracker or contact us on Discord for assistance.

Run the Webview UI on other Platforms:

Use your smartphone:

Run the Web UI on Your Smartphone:

Use Python

Prerequisites:

- Download and install Python (Version 3.10+ is recommended).

- Install Google Chrome for providers with webdriver

Install using PyPI package:

pip install -U g4f[all]

How do I install only parts or disable parts? Use partial requirements: /docs/requirements

Install from source:

How do I load the project using git and install the project requirements? Read this tutorial and follow it step by step: /docs/git

Install using Docker:

How do I build and run the compose image from source? Use docker-compose: /docs/docker

💡 Usage

Text Generation

from g4f.client import Client

client = Client()

response = client.chat.completions.create(

    model="gpt-3.5-turbo",

    messages=[{"role": "user", "content": "Hello"}],

    # ... (further optional parameters)

)

print(response.choices[0].message.content)

Hello! How can I assist you today?
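For a multi-turn conversation, the messages list has to carry the earlier turns. Below is a minimal sketch of one way to keep that history; chat_turn and fake_create are hypothetical helpers written for this example, not g4f functions. The stand-in fake_create lets the snippet run offline; in real use you would pass client.chat.completions.create instead.

```python
from types import SimpleNamespace


def chat_turn(create, history, user_text, model="gpt-3.5-turbo"):
    """Send one user message and record both sides of the exchange.

    `create` is any callable with the keyword interface used above, for
    example `client.chat.completions.create`. `history` is modified in place.
    """
    history.append({"role": "user", "content": user_text})
    response = create(model=model, messages=history)
    reply = response.choices[0].message.content
    history.append({"role": "assistant", "content": reply})
    return reply


def fake_create(model, messages):
    """Offline stand-in (hypothetical) that echoes the last user message."""
    text = "echo: " + messages[-1]["content"]
    message = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(message=message)])


history = []
print(chat_turn(fake_create, history, "Hello"))  # prints "echo: Hello"
print(len(history))  # prints 2 (one user turn, one assistant turn)
```

Passing the create callable in as an argument keeps the history logic independent of any particular client or provider.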

Image Generation

from g4f.client import Client

client = Client()

response = client.images.generate(

    model="gemini",

    prompt="a white siamese cat",

    # ... (further optional parameters)

)

image_url = response.data[0].url

Full Documentation for Python API

- New AsyncClient API from G4F: /docs/async_client

- Client API like the OpenAI Python library: /docs/client

- Legacy API with python modules: /docs/legacy

Web UI

To start the web interface, run the following code in Python:

from g4f.gui import run_gui

run_gui()

or execute the following command:

python -m g4f.cli gui -port 8080 -debug

Interference API

You can use the Interference API to serve other OpenAI integrations with G4F.

See docs: /docs/interference

Access with: http://localhost:1337/v1
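As a rough illustration, a client can talk to this API base with nothing but the standard library. The sketch below assumes the server follows the OpenAI convention of a chat/completions path under the base URL and a JSON body with model and messages; that path and the helper names (build_chat_request, send) are assumptions, so check /docs/interference for the actual routes.

```python
import json
import urllib.request

API_BASE = "http://localhost:1337/v1"  # API base given above


def build_chat_request(messages, model="gpt-3.5-turbo", api_base=API_BASE):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {"model": model, "messages": messages}
    return urllib.request.Request(
        url=api_base.rstrip("/") + "/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the decoded JSON body (needs a running server)."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)


request = build_chat_request([{"role": "user", "content": "Hello"}])
print(request.get_method(), request.full_url)
```

Building the request separately from sending it makes the payload easy to inspect before anything goes over the network. With the API running, send(request) would return the parsed response.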

Configuration

Cookies

Cookies are essential for using Meta AI and Microsoft Designer to create images. Additionally, cookies are required for the Google Gemini and WhiteRabbitNeo Provider. From Bing, ensure you have the "_U" cookie, and from Google, all cookies starting with "__Secure-1PSID" are needed.

You can pass these cookies directly to the create function or set them using the set_cookies method before running G4F:

from g4f.cookies import set_cookies

set_cookies(".bing.com", {

"_U": "cookie value"

})

set_cookies(".google.com", {

"__Secure-1PSID": "cookie value"

})

Using .har and Cookie Files

You can place .har and cookie files in the default ./har_and_cookies directory. To export a cookie file, use the EditThisCookie Extension available on the Chrome Web Store.
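If you would rather pass exported cookies to set_cookies yourself, the export first has to be grouped by domain. The sketch below assumes the export is a JSON array of objects with "domain", "name" and "value" keys, which is how browser cookie exports are commonly laid out; group_cookies_by_domain is a hypothetical helper, not part of g4f.

```python
import json


def group_cookies_by_domain(export_text):
    """Turn a JSON array of cookie objects into {domain: {name: value}}.

    Assumes each object has "domain", "name" and "value" keys; entries
    missing any of them are skipped.
    """
    grouped = {}
    for cookie in json.loads(export_text):
        if not all(key in cookie for key in ("domain", "name", "value")):
            continue
        grouped.setdefault(cookie["domain"], {})[cookie["name"]] = cookie["value"]
    return grouped


sample = '[{"domain": ".bing.com", "name": "_U", "value": "cookie value"}]'
for domain, cookies in group_cookies_by_domain(sample).items():
    # In real use this is where set_cookies(domain, cookies) would be called.
    print(domain, sorted(cookies))
```

Each resulting pair has the same shape as the arguments in the set_cookies examples above.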

Creating .har Files to Capture Cookies

To capture cookies, you can also create .har files. For more details, refer to the next section.

Changing the Cookies Directory and Loading Cookie Files in Python

You can change the cookies directory and load cookie files in your Python environment. To set the cookies directory relative to your Python file, use the following code:

import os.path

from g4f.cookies import set_cookies_dir, read_cookie_files

import g4f.debug

g4f.debug.logging = True

cookies_dir = os.path.join(os.path.dirname(__file__), "har_and_cookies")

set_cookies_dir(cookies_dir)

read_cookie_files(cookies_dir)

Debug Mode

If you enable debug mode, you will see logs similar to the following:

Read .har file: ./har_and_cookies/you.com.har

Cookies added: 10 from .you.com

Read cookie file: ./har_and_cookies/google.json

Cookies added: 16 from .google.com

.HAR File for OpenaiChat Provider

Generating a .HAR File

To utilize the OpenaiChat provider, a .har file is required from https://chatgpt.com/. Follow the steps below to create a valid .har file:

- Navigate to https://chatgpt.com/ using your preferred web browser and log in with your credentials.

- Access the Developer Tools in your browser. This can typically be done by right-clicking the page and selecting "Inspect," or by pressing F12 or Ctrl+Shift+I (Cmd+Option+I on a Mac).

- With the Developer Tools open, switch to the "Network" tab.

- Reload the website to capture the loading process within the Network tab.

- Initiate an action in the chat which can be captured in the .har file.

- Right-click any of the network activities listed and select "Save all as HAR with content" to export the .har file.

Storing the .HAR File

- Place the exported .har file in the ./har_and_cookies directory if you are using Docker. Alternatively, you can store it in any preferred location within your current working directory.

Note: Ensure that your .har file is stored securely, as it may contain sensitive information.
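Before storing or sharing a .har file, it can be useful to see what it contains. The following sketch relies on the standard HAR layout (a top-level "log" object with an "entries" list, each entry holding a "request" with "url" and "cookies"); summarize_har is a hypothetical helper and only reads the file.

```python
import json
from urllib.parse import urlsplit


def summarize_har(path):
    """Return (entry_count, sorted hosts, sorted cookie names) for a .har file."""
    # utf-8-sig tolerates an optional byte order mark at the start of the file.
    with open(path, encoding="utf-8-sig") as handle:
        entries = json.load(handle)["log"]["entries"]
    hosts, cookie_names = set(), set()
    for entry in entries:
        request = entry.get("request", {})
        host = urlsplit(request.get("url", "")).hostname
        if host:
            hosts.add(host)
        for cookie in request.get("cookies", []):
            cookie_names.add(cookie.get("name", ""))
    return len(entries), sorted(hosts), sorted(cookie_names)
```

For example, summarize_har("./har_and_cookies/chatgpt.com.har") (the file name here is only an illustration) lists the hosts and cookie names that were captured, without printing any cookie values.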

Using Proxy

If you want to hide or change your IP address for the providers, you can set a proxy globally via an environment variable:

- On macOS and Linux:

export G4F_PROXY="http://host:port"

- On Windows:

set G4F_PROXY=http://host:port
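The same variable can also be set from Python for the current process. This is a small sketch with a basic sanity check on the URL; configure_proxy is a hypothetical helper, and calling it before importing g4f is an assumption made here to be safe, since this document does not state when the variable is read.

```python
import os
from urllib.parse import urlsplit


def configure_proxy(proxy_url):
    """Validate a proxy URL and export it as G4F_PROXY for this process."""
    parts = urlsplit(proxy_url)
    # Require an explicit scheme and host, as in "http://host:port".
    if not parts.scheme or not parts.hostname:
        raise ValueError(f"not a usable proxy URL: {proxy_url!r}")
    os.environ["G4F_PROXY"] = proxy_url
    return proxy_url
```

A call such as configure_proxy("http://127.0.0.1:8080") affects only the running process and its children; it does not change your shell environment.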

🚀 Providers and Models

GPT-4

| Website | Provider | GPT-3.5 | GPT-4 | Stream | Status | Auth |

|---|---|---|---|---|---|---|

| bing.com | g4f.Provider.Bing | ❌ | ✔️ | ✔️ |  | ❌ |

| chatgpt.ai | g4f.Provider.ChatgptAi | ❌ | ✔️ | ✔️ |  | ❌ |

| liaobots.site | g4f.Provider.Liaobots | ✔️ | ✔️ | ✔️ |  | ❌ |

| chatgpt.com | g4f.Provider.OpenaiChat | ✔️ | ✔️ | ✔️ |  | ❌+✔️ |

| raycast.com | g4f.Provider.Raycast | ✔️ | ✔️ | ✔️ |  | ✔️ |

| beta.theb.ai | g4f.Provider.Theb | ✔️ | ✔️ | ✔️ |  | ❌ |

| you.com | g4f.Provider.You | ✔️ | ✔️ | ✔️ |  | ❌ |
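Because the availability of individual providers varies, callers may want to fall back from one option to the next. The sketch below shows the general idea in plain Python; it is not g4f's own mechanism, and first_successful is a hypothetical helper. Each attempt is a zero-argument callable, which in practice might wrap a client.chat.completions.create call configured differently.

```python
import time


def first_successful(attempts, retries_per_attempt=1, delay=0.0):
    """Call each zero-argument callable in order and return the first result.

    `attempts` is a list of (label, callable) pairs. Exceptions are collected;
    if every attempt fails, a RuntimeError lists what went wrong.
    """
    errors = []
    for label, call in attempts:
        for _ in range(retries_per_attempt):
            try:
                return label, call()
            except Exception as exc:  # broad on purpose: any failure triggers fallback
                errors.append(f"{label}: {exc}")
                time.sleep(delay)
    raise RuntimeError("all attempts failed: " + "; ".join(errors))


def broken():
    """Simulates an option that is currently unavailable."""
    raise ConnectionError("provider offline")


print(first_successful([("a", broken), ("b", lambda: "Hello!")]))  # prints ('b', 'Hello!')
```

Returning the label together with the result makes it visible which option actually answered.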

Best OpenSource Models

While we wait for gpt-5, here is a list of new models that are at least better than gpt-3.5-turbo. Some are better than gpt-4. Expect this list to grow.

| Model | Provider | Parameters | Better than |

|---|---|---|---|

| claude-3-opus | g4f.Provider.You | ?B | gpt-4-0125-preview |

| command-r+ | g4f.Provider.HuggingChat | 104B | gpt-4-0314 |

| llama-3-70b | g4f.Provider.Llama or DeepInfra | 70B | gpt-4-0314 |

| claude-3-sonnet | g4f.Provider.You | ?B | gpt-4-0314 |

| reka-core | g4f.Provider.Reka | 21B | gpt-4-vision |

| dbrx-instruct | g4f.Provider.DeepInfra | 132B / 36B active | gpt-3.5-turbo |

| mixtral-8x22b | g4f.Provider.DeepInfra | 176B / 44B active | gpt-3.5-turbo |

GPT-3.5

| Website | Provider | GPT-3.5 | GPT-4 | Stream | Status | Auth |

|---|---|---|---|---|---|---|

| chat3.aiyunos.top | g4f.Provider.AItianhuSpace | ✔️ | ❌ | ✔️ |  | ❌ |

| chat10.aichatos.xyz | g4f.Provider.Aichatos | ✔️ | ❌ | ✔️ |  | ❌ |

| chatforai.store | g4f.Provider.ChatForAi | ✔️ | ❌ | ✔️ |  | ❌ |

| chatgpt4online.org | g4f.Provider.Chatgpt4Online | ✔️ | ❌ | ✔️ |  | ❌ |

| chatgpt-free.cc | g4f.Provider.ChatgptNext | ✔️ | ❌ | ✔️ |  | ❌ |

| chatgptx.de | g4f.Provider.ChatgptX | ✔️ | ❌ | ✔️ |  | ❌ |

| duckduckgo.com | g4f.Provider.DDG | ✔️ | ❌ | ✔️ |  | ❌ |

| feedough.com | g4f.Provider.Feedough | ✔️ | ❌ | ✔️ |  | ❌ |

| flowgpt.com | g4f.Provider.FlowGpt | ✔️ | ❌ | ✔️ |  | ❌ |

| freegptsnav.aifree.site | g4f.Provider.FreeGpt | ✔️ | ❌ | ✔️ |  | ❌ |

| gpttalk.ru | g4f.Provider.GptTalkRu | ✔️ | ❌ | ✔️ |  | ❌ |

| koala.sh | g4f.Provider.Koala | ✔️ | ❌ | ✔️ |  | ❌ |

| app.myshell.ai | g4f.Provider.MyShell | ✔️ | ❌ | ✔️ |  | ❌ |

| perplexity.ai | g4f.Provider.PerplexityAi | ✔️ | ❌ | ✔️ |  | ❌ |

| poe.com | g4f.Provider.Poe | ✔️ | ❌ | ✔️ |  | ✔️ |

| talkai.info | g4f.Provider.TalkAi | ✔️ | ❌ | ✔️ |  | ❌ |

| chat.vercel.ai | g4f.Provider.Vercel | ✔️ | ❌ | ✔️ |  | ❌ |

| aitianhu.com | g4f.Provider.AItianhu | ✔️ | ❌ | ✔️ |  | ❌ |

| chatgpt.bestim.org | g4f.Provider.Bestim | ✔️ | ❌ | ✔️ |  | ❌ |

| chatbase.co | g4f.Provider.ChatBase | ✔️ | ❌ | ✔️ |  | ❌ |

| chatgptdemo.info | g4f.Provider.ChatgptDemo | ✔️ | ❌ | ✔️ |  | ❌ |

| chat.chatgptdemo.ai | g4f.Provider.ChatgptDemoAi | ✔️ | ❌ | ✔️ |  | ❌ |

| chatgptfree.ai | g4f.Provider.ChatgptFree | ✔️ | ❌ | ❌ |  | ❌ |

| chatgptlogin.ai | g4f.Provider.ChatgptLogin | ✔️ | ❌ | ✔️ |  | ❌ |

| chat.3211000.xyz | g4f.Provider.Chatxyz | ✔️ | ❌ | ✔️ |  | ❌ |

| gpt6.ai | g4f.Provider.Gpt6 | ✔️ | ❌ | ✔️ |  | ❌ |

| gptchatly.com | g4f.Provider.GptChatly | ✔️ | ❌ | ❌ |  | ❌ |

| ai18.gptforlove.com | g4f.Provider.GptForLove | ✔️ | ❌ | ✔️ |  | ❌ |

| gptgo.ai | g4f.Provider.GptGo | ✔️ | ❌ | ✔️ |  | ❌ |

| gptgod.site | g4f.Provider.GptGod | ✔️ | ❌ | ✔️ |  | ❌ |

| onlinegpt.org | g4f.Provider.OnlineGpt | ✔️ | ❌ | ✔️ |  | ❌ |

Other

| Website | Provider | Stream | Status | Auth |

|---|---|---|---|---|

| openchat.team | g4f.Provider.Aura | ✔️ |  | ❌ |

| blackbox.ai | g4f.Provider.Blackbox | ✔️ |  | ❌ |

| cohereforai-c4ai-command-r-plus.hf.space | g4f.Provider.Cohere | ✔️ |  | ❌ |

| deepinfra.com | g4f.Provider.DeepInfra | ✔️ |  | ❌ |

| free.chatgpt.org.uk | g4f.Provider.FreeChatgpt | ✔️ |  | ❌ |

| gemini.google.com | g4f.Provider.Gemini | ✔️ |  | ✔️ |

| ai.google.dev | g4f.Provider.GeminiPro | ✔️ |  | ✔️ |

| gemini-chatbot-sigma.vercel.app | g4f.Provider.GeminiProChat | ✔️ |  | ❌ |

| developers.sber.ru | g4f.Provider.GigaChat | ✔️ |  | ✔️ |

| console.groq.com | g4f.Provider.Groq | ✔️ |  | ✔️ |

| huggingface.co | g4f.Provider.HuggingChat | ✔️ |  | ❌ |

| huggingface.co | g4f.Provider.HuggingFace | ✔️ |  | ❌ |

| llama2.ai | g4f.Provider.Llama | ✔️ |  | ❌ |

| meta.ai | g4f.Provider.MetaAI | ✔️ |  | ❌ |

| openrouter.ai | g4f.Provider.OpenRouter | ✔️ |  | ✔️ |

| labs.perplexity.ai | g4f.Provider.PerplexityLabs | ✔️ |  | ❌ |

| pi.ai | g4f.Provider.Pi | ✔️ |  | ❌ |

| replicate.com | g4f.Provider.Replicate | ✔️ |  | ❌ |

| theb.ai | g4f.Provider.ThebApi | ✔️ |  | ✔️ |

| whiterabbitneo.com | g4f.Provider.WhiteRabbitNeo | ✔️ |  | ✔️ |

| bard.google.com | g4f.Provider.Bard | ❌ |  | ✔️ |

Models

| Model | Base Provider | Provider | Website |

|---|---|---|---|

| gpt-3.5-turbo | OpenAI | 8+ Providers | openai.com |

| gpt-4 | OpenAI | 2+ Providers | openai.com |

| gpt-4-turbo | OpenAI | g4f.Provider.Bing | openai.com |

| Llama-2-7b-chat-hf | Meta | 2+ Providers | llama.meta.com |

| Llama-2-13b-chat-hf | Meta | 2+ Providers | llama.meta.com |

| Llama-2-70b-chat-hf | Meta | 3+ Providers | llama.meta.com |

| Meta-Llama-3-8b-instruct | Meta | 1+ Providers | llama.meta.com |

| Meta-Llama-3-70b-instruct | Meta | 2+ Providers | llama.meta.com |

| CodeLlama-34b-Instruct-hf | Meta | g4f.Provider.HuggingChat | llama.meta.com |

| CodeLlama-70b-Instruct-hf | Meta | 2+ Providers | llama.meta.com |

| Mixtral-8x7B-Instruct-v0.1 | Huggingface | 4+ Providers | huggingface.co |

| Mistral-7B-Instruct-v0.1 | Huggingface | 3+ Providers | huggingface.co |

| Mistral-7B-Instruct-v0.2 | Huggingface | g4f.Provider.DeepInfra | huggingface.co |

| zephyr-orpo-141b-A35b-v0.1 | Huggingface | 2+ Providers | huggingface.co |

| dolphin-2.6-mixtral-8x7b | Huggingface | g4f.Provider.DeepInfra | huggingface.co |

| gemini | Google | g4f.Provider.Gemini | gemini.google.com |

| gemini-pro | Google | 2+ Providers | gemini.google.com |

| claude-v2 | Anthropic | 1+ Providers | anthropic.com |

| claude-3-opus | Anthropic | g4f.Provider.You | anthropic.com |

| claude-3-sonnet | Anthropic | g4f.Provider.You | anthropic.com |

| lzlv_70b_fp16_hf | Huggingface | g4f.Provider.DeepInfra | huggingface.co |

| airoboros-70b | Huggingface | g4f.Provider.DeepInfra | huggingface.co |

| openchat_3.5 | Huggingface | 2+ Providers | huggingface.co |

| pi | Inflection | g4f.Provider.Pi | inflection.ai |

Image and Vision Models

| Label | Provider | Image Model | Vision Model | Website |

|---|---|---|---|---|

| Microsoft Copilot in Bing | g4f.Provider.Bing | dall-e-3 | gpt-4-vision | bing.com |

| DeepInfra | g4f.Provider.DeepInfra | stability-ai/sdxl | llava-1.5-7b-hf | deepinfra.com |

| Gemini | g4f.Provider.Gemini | ✔️ | ✔️ | gemini.google.com |

| Gemini API | g4f.Provider.GeminiPro | ❌ | gemini-1.5-pro | ai.google.dev |

| Meta AI | g4f.Provider.MetaAI | ✔️ | ❌ | meta.ai |

| OpenAI ChatGPT | g4f.Provider.OpenaiChat | dall-e-3 | gpt-4-vision | chatgpt.com |

| Reka | g4f.Provider.Reka | ❌ | ✔️ | chat.reka.ai |

| Replicate | g4f.Provider.Replicate | stability-ai/sdxl | llava-v1.6-34b | replicate.com |

| You.com | g4f.Provider.You | dall-e-3 | ✔️ | you.com |

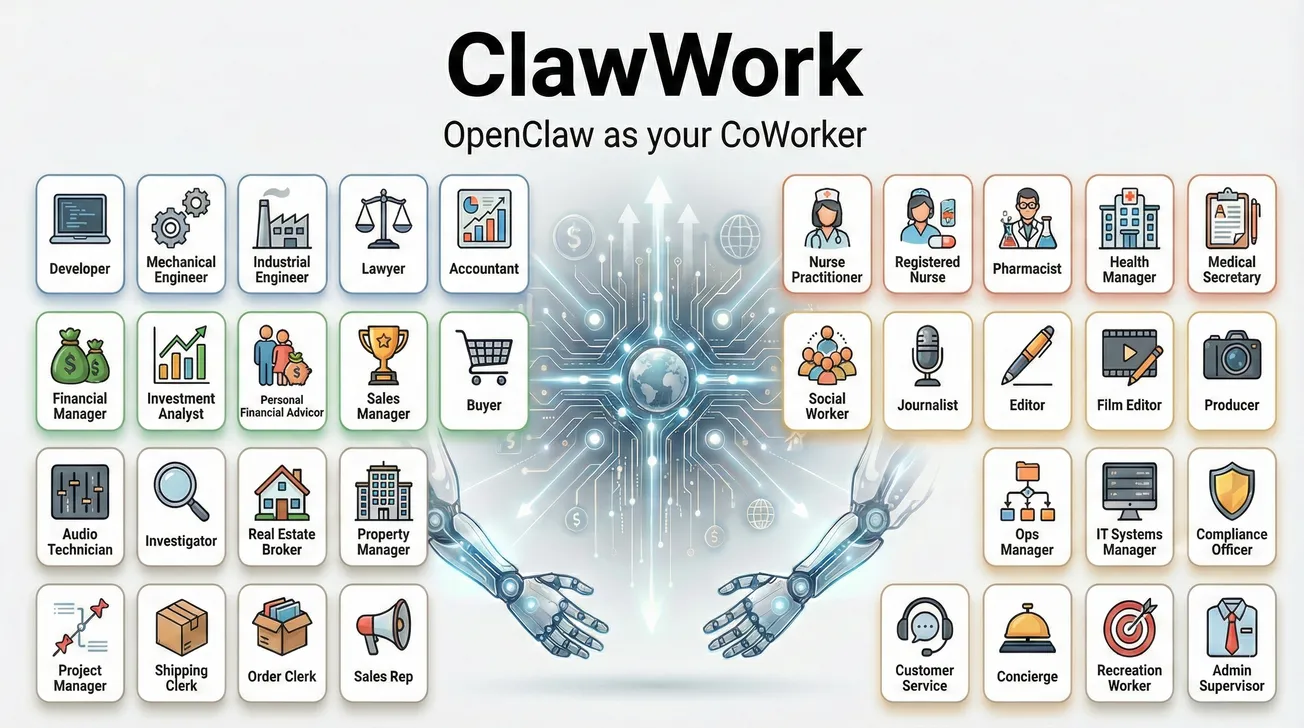

🔗 Powered by gpt4free

🤝 Contribute

We welcome contributions from the community. Whether you're adding new providers or features, or simply fixing typos and making small improvements, your input is valued. Creating a pull request is all it takes – our co-pilot will handle the code review process. Once all changes have been addressed, we'll merge the pull request into the main branch and release the updates at a later time.

Guide: How do I create a new Provider?

Guide: How can AI help me with writing code?

- Read: /docs/guides/help_me